Sat, 21 Mar

Online

About

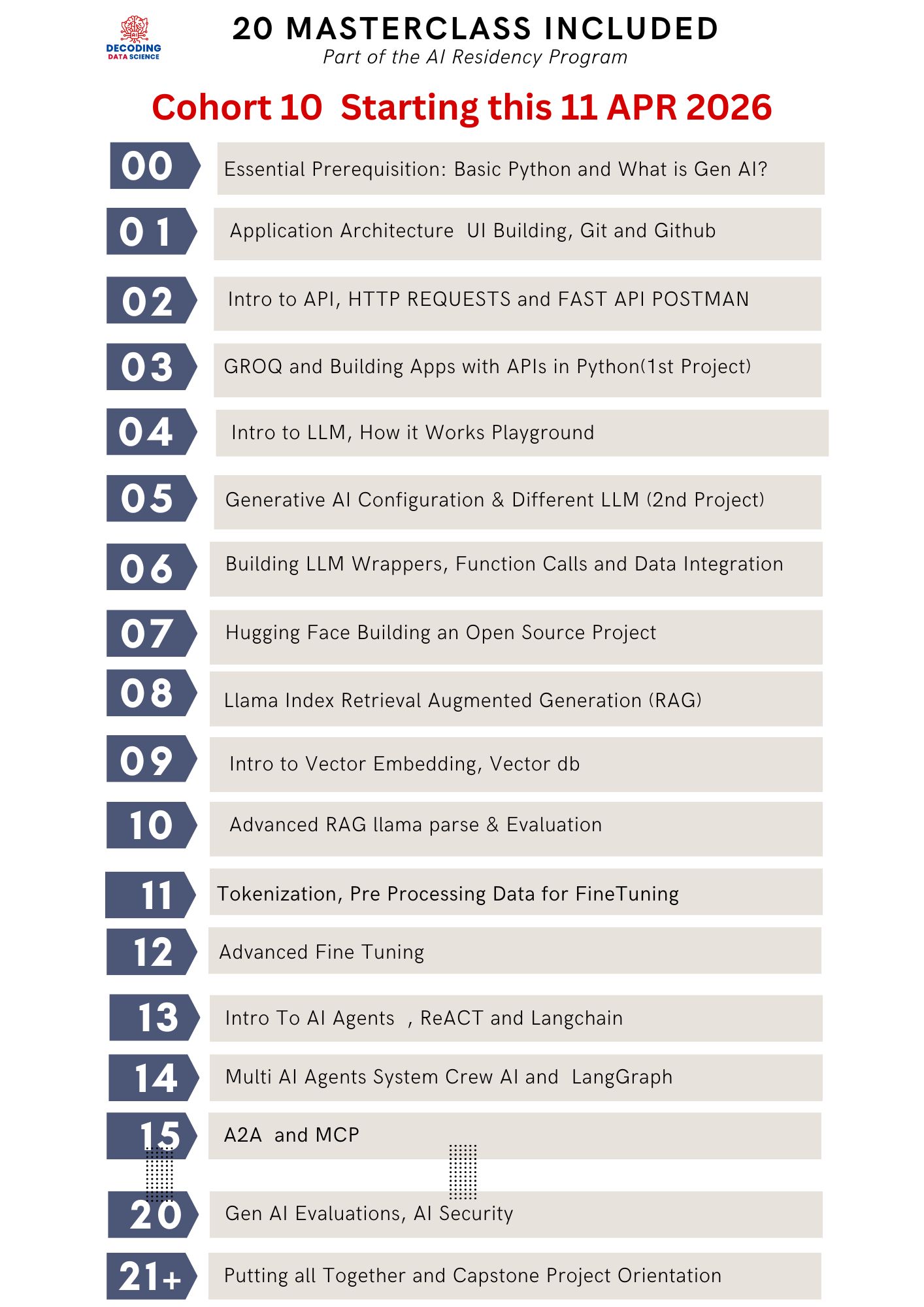

(Part of the AI Residency Program – End-to-End AI Project Lifecycle)

Overview

This module focuses on building production-grade Retrieval-Augmented Generation (RAG) systems using LlamaIndex, a framework for connecting LLMs with structured and unstructured data.

As part of the AI Residency, participants will not only implement advanced RAG pipelines but also understand how these systems fit into the complete lifecycle of an AI project—from data ingestion to evaluation and optimization.

What You Will Learn

1. Advanced RAG with LlamaIndex

- Building RAG pipelines using LlamaIndex

- Data ingestion pipelines (PDFs, APIs, structured + unstructured sources)

- Node parsing, chunking strategies, and document indexing

- Working with vector stores (Chroma, Pinecone, FAISS) via LlamaIndex

- Query engines and composable retrieval pipelines
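
To make the chunking and retrieval steps above concrete, here is a minimal sketch in plain Python. It does not use LlamaIndex. The names `chunk_text`, `embed`, `cosine`, and `retrieve` are hypothetical helpers, and the word-count "embedding" is only a stand-in for a real embedding model and vector store.

```python
import math
import re
from collections import Counter


def chunk_text(text, chunk_size=8, overlap=2):
    """Split text into windows of `chunk_size` words that share `overlap` words."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def embed(text):
    """Toy embedding (assumption): lowercase word counts, not a real model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, chunks, top_k=2):
    """Return the `top_k` chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]
```

In a real pipeline the framework's node parser, embedding model, and vector store would replace these helpers; the sketch only shows how chunk overlap and similarity ranking fit together.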

2. Retrieval Optimization

- Query transformation and routing

- Metadata filtering and structured retrieval

- Multi-step retrieval (sub-questions, decomposition)

- Re-ranking and improving relevance
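
As a rough illustration of metadata filtering followed by re-ranking, the sketch below narrows candidates by exact metadata match and then reorders them by query-term overlap. It is plain Python, not the LlamaIndex API. `Passage`, `filter_by_metadata`, and `rerank` are hypothetical names, and the overlap score is a simple stand-in for a learned re-ranker.

```python
from dataclasses import dataclass, field


@dataclass
class Passage:
    """A retrieved text span with metadata (hypothetical structure)."""
    text: str
    metadata: dict = field(default_factory=dict)
    score: float = 0.0  # first-stage retrieval score, unused by the re-ranker


def filter_by_metadata(passages, **required):
    """Keep only passages whose metadata matches every required key/value."""
    return [
        p for p in passages
        if all(p.metadata.get(k) == v for k, v in required.items())
    ]


def rerank(query, passages, top_n=2):
    """Second stage: order passages by the fraction of query terms they contain."""
    terms = set(query.lower().split())

    def overlap(p):
        words = set(p.text.lower().split())
        return len(terms & words) / len(terms) if terms else 0.0

    return sorted(passages, key=overlap, reverse=True)[:top_n]


candidates = [
    Passage("refund policy for annual plans", {"source": "policy", "year": 2024}, 0.4),
    Passage("annual report highlights", {"source": "report", "year": 2024}, 0.9),
    Passage("shipping policy overview", {"source": "policy", "year": 2024}, 0.8),
]
filtered = filter_by_metadata(candidates, source="policy", year=2024)
best = rerank("refund policy", filtered, top_n=1)
```

Filtering first keeps the re-ranker's workload small; the re-ranker can then promote a passage that the first-stage score ranked lower.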

3. Evaluation & Observability

- Evaluating RAG outputs (faithfulness, relevance, correctness)

- Creating test datasets and evaluation pipelines

- Debugging retrieval failures

- Reducing hallucination through grounded responses
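
One possible shape for a small evaluation pipeline is sketched below. It computes hit rate and mean reciprocal rank (MRR) over a hand-built test set and lists the queries whose expected document was never retrieved. These are retrieval-side metrics, not the faithfulness or correctness checks named above, and the function names and data layout are assumptions made for illustration.

```python
def hit_rate_and_mrr(results, expected):
    """Score ranked retrieval results against one known relevant doc per query.

    results:  {query: ranked list of retrieved doc ids}
    expected: {query: id of the doc that should be retrieved}
    """
    if not expected:
        return 0.0, 0.0
    hits = 0
    reciprocal_ranks = []
    for query, relevant_id in expected.items():
        ranked = results.get(query, [])
        if relevant_id in ranked:
            hits += 1
            reciprocal_ranks.append(1.0 / (ranked.index(relevant_id) + 1))
        else:
            reciprocal_ranks.append(0.0)
    n = len(expected)
    return hits / n, sum(reciprocal_ranks) / n


def missed_queries(results, expected):
    """Queries whose relevant doc is absent: a starting point for debugging."""
    return [q for q, doc in expected.items() if doc not in results.get(q, [])]


expected = {"q1": "d1", "q2": "d5", "q3": "d9"}
results = {"q1": ["d1", "d2"], "q2": ["d3", "d5"], "q3": ["d4", "d6"]}
hit_rate, mrr = hit_rate_and_mrr(results, expected)
failures = missed_queries(results, expected)
```

Tracking these numbers across chunking or re-ranking changes gives a quick signal on whether retrieval improved before any generation-level evaluation is run.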

4. Introduction to Fine-Tuning

- When to use RAG vs Fine-Tuning vs Prompt Engineering

- Overview of fine-tuning techniques (LoRA, instruction tuning)

- Dataset preparation and labeling strategies

- Cost vs performance trade-offs
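
To give a feel for the cost side of the trade-off, the arithmetic below compares trainable parameters for full fine-tuning of one weight matrix with a LoRA adapter on the same matrix. LoRA freezes the original weights and trains two low-rank factors of shapes `d_out x r` and `r x d_in`. The layer size and rank used here are illustrative assumptions, not figures from this module.

```python
def full_finetune_params(d_in, d_out):
    """Trainable parameters when the whole d_out x d_in matrix is updated."""
    return d_in * d_out


def lora_params(d_in, d_out, rank):
    """Trainable parameters for LoRA factors B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_in + d_out)


# Illustrative sizes (assumption): a 4096 x 4096 projection with rank 8.
d_in, d_out, rank = 4096, 4096, 8
full = full_finetune_params(d_in, d_out)
lora = lora_params(d_in, d_out, rank)
fraction = lora / full
```

With these sizes the adapter trains 65,536 parameters against 16,777,216 for the full matrix, roughly 0.4 percent, which is why LoRA is often the first fine-tuning option considered when compute is limited.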

5. System Design: RAG + Fine-Tuning

- Designing enterprise AI applications (chatbots, copilots, assistants)

- Combining retrieval with fine-tuned models

- Scaling AI systems for real-world use cases

Outcome

By the end of this module, participants will:

- Build a fully functional Advanced RAG system using LlamaIndex

- Understand how to design, debug, and optimize retrieval pipelines

- Gain clarity on when to use RAG vs Fine-Tuning in production

- Develop skills to build real-world AI applications (not just demos)

- Understand the end-to-end lifecycle of an AI project, from data → model → evaluation → deployment

Key Takeaways

- LlamaIndex enables structured, modular, and scalable RAG systems

- Advanced RAG is about retrieval quality + system design, not just embeddings

- Fine-tuning should be used only when RAG and prompting are insufficient

- Evaluation is a core component, not an afterthought

- The real skill is understanding the full AI lifecycle, not just calling APIs

Positioning within AI Residency

This module ensures participants transition from:

“Calling LLM APIs” → “Building AI Systems with Data + Retrieval + Evaluation”

It is where participants start thinking like:

- AI Engineers

- System Designers

- Product Builders

This masterclass is part of the AI Residency.

✅ Join the new AI Residency cohort to build this end-to-end with guided support, project feedback, and a production-ready workflow—from data ingestion → indexing → retrieval → evaluation → deployment.

https://academy.decodingdatascience.com/airesidencyfasttrack

Location