Sat, Mar 28

Online

About

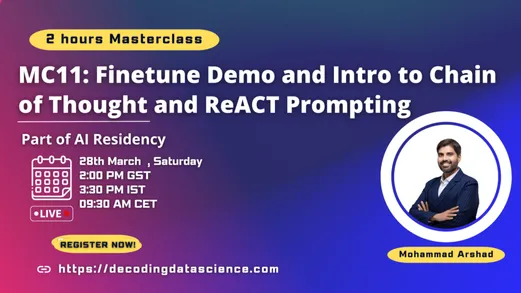

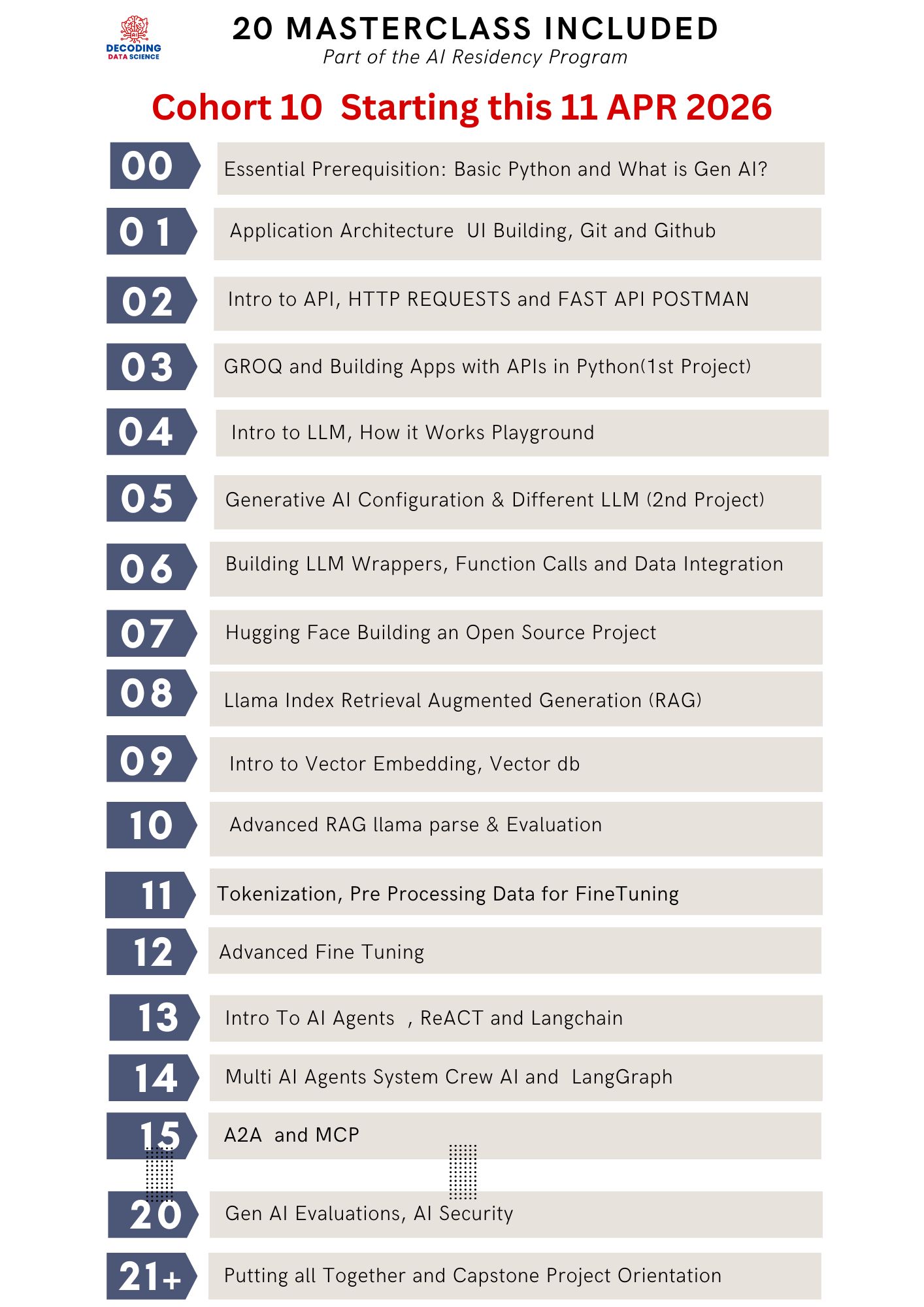

(Part of the AI Residency Program – End-to-End AI Project Lifecycle)

Overview

In this masterclass, participants will explore prompting techniques that help large language models produce more structured, logical, and useful responses, including Chain of Thought and ReAct prompting. The session will show how to guide a model to reason through a problem step by step, and how to combine that reasoning with actions such as calling tools, retrieving information, and making decisions deliberately.
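To make the reasoning-plus-action idea concrete, here is a minimal sketch of a ReAct-style loop. It is not the session's material: the `scripted_model` function is a hypothetical stand-in for a real LLM call, the `calculator` tool and the `Thought / Action / Observation / Final Answer` text format are assumptions chosen for illustration, and a real agent would replace the canned replies with model output.

```python
import operator
import re

# Hypothetical tool: a tiny calculator that handles "<int> <op> <int>" only.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}

def calculator(expression: str) -> str:
    match = re.fullmatch(r"\s*(\d+)\s*([+\-*])\s*(\d+)\s*", expression)
    if match is None:
        return "error: unsupported expression"
    left, op, right = match.groups()
    return str(OPS[op](int(left), int(right)))

TOOLS = {"calculator": calculator}

def scripted_model(transcript: str) -> str:
    """Stand-in for an LLM call (assumption): returns canned ReAct steps."""
    if "Observation:" not in transcript:
        # First turn: reason step by step, then request a tool call.
        return "Thought: I need to multiply 17 by 24.\nAction: calculator[17 * 24]"
    # Second turn: the observation is available, so answer.
    return "Thought: The tool returned the product.\nFinal Answer: 408"

def react_loop(question, model, max_steps=5):
    """Alternate model steps and tool observations until a final answer."""
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        step = model(transcript)
        transcript += "\n" + step
        final = re.search(r"Final Answer:\s*(.+)", step)
        if final:
            return final.group(1).strip(), transcript
        action = re.search(r"Action:\s*(\w+)\[(.*)\]", step)
        if action is None:
            break  # The model produced neither an action nor an answer.
        name, argument = action.groups()
        tool = TOOLS.get(name)
        observation = tool(argument) if tool else f"error: unknown tool {name}"
        transcript += f"\nObservation: {observation}"
    return None, transcript

answer, trace = react_loop("What is 17 * 24?", scripted_model)
print(trace)
```

The printed trace shows the pattern: a thought, a tool call, the observation fed back into the prompt, and then the final answer. Chain of Thought prompting alone would keep only the "Thought" steps and skip the tool call.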

Alongside the prompting techniques, the session will include a hands-on LLM fine-tuning demo in which participants learn how to fine-tune a small language model on an instruction-based dataset. The demo will walk through the practical workflow: preparing the dataset, loading the model, configuring the training process, and running the fine-tuning job in a cloud-based environment through a supporting framework.
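As a rough illustration of the dataset-preparation step only, the sketch below turns instruction records into prompt/completion pairs and holds out a validation split. The field names (`instruction`, `input`, `output`), the prompt template, and the sample records are all assumptions made for this example; the demo's actual dataset, model, and framework are not specified here, so model loading and training are left out.

```python
import random

# Assumed prompt templates in a common instruction-tuning style.
TEMPLATE_WITH_INPUT = (
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{input}\n\n"
    "### Response:\n"
)
TEMPLATE_NO_INPUT = "### Instruction:\n{instruction}\n\n### Response:\n"

def format_example(record):
    """Convert one raw record into a prompt/completion pair."""
    if record.get("input"):
        prompt = TEMPLATE_WITH_INPUT.format(
            instruction=record["instruction"], input=record["input"]
        )
    else:
        prompt = TEMPLATE_NO_INPUT.format(instruction=record["instruction"])
    return {"prompt": prompt, "completion": record["output"]}

def train_val_split(examples, val_fraction=0.2, seed=0):
    """Shuffle deterministically and hold out a validation slice."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, int(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]

# Made-up sample records, for illustration only.
raw_records = [
    {"instruction": "Translate to French.", "input": "Good morning", "output": "Bonjour"},
    {"instruction": "Name a prime number.", "input": "", "output": "7"},
    {"instruction": "Summarize the text.", "input": "Cats sleep a lot.", "output": "Cats sleep often."},
    {"instruction": "Give an antonym.", "input": "hot", "output": "cold"},
    {"instruction": "Write a greeting.", "input": "", "output": "Hello there!"},
]

examples = [format_example(r) for r in raw_records]
train_set, val_set = train_val_split(examples)
print(len(train_set), len(val_set))
print(examples[0]["prompt"] + examples[0]["completion"])
```

During training, the prompt and completion are typically concatenated into one sequence, and many setups compute the loss only on the completion tokens; whether the demo does so depends on the framework it uses.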

Through practical examples and discussion, participants will understand when these techniques are useful, how they improve model performance and reliability, and how they support the development of more capable AI applications and agents. This session is ideal for learners who want to move beyond basic prompting and gain exposure to both reasoning-oriented prompt design and the foundations of adapting models through fine-tuning.

This masterclass is part of the AI Residency.

✅ Join the new AI Residency cohort to build this end-to-end with guided support, project feedback, and a production-ready workflow, from data ingestion → indexing → retrieval → evaluation → deployment.

https://academy.decodingdatascience.com/airesidencyfasttrack

Location